Meta Finally Revealed The Truth About LLAMA 4

# Meta’s Llama 4 Release: Behind the Drama and Benchmarks

The recent release of Meta’s Llama 4 language model has been accompanied by controversy and questions about its actual capabilities versus its marketed performance. This blog post examines the situation and provides insights into what might be happening behind the scenes.

## The Missing Technical Paper

One of the first red flags was that Meta released Llama 4 without a technical paper, an unusual move for a major AI model release. Without visibility into the model’s architecture and training methods, some critics suspect Meta may have overfitted the model to score well on benchmark tests at the expense of real-world performance.

## Internal Turmoil at Meta?

An anonymous post from someone claiming to be inside Meta suggested the company’s AI team was in “panic mode” following the release of DeepSeek V3, a model from a relatively unknown Chinese company. According to this source:

– DeepSeek V3 was developed with just a $5.5 million training budget

– Meta’s leadership was concerned about justifying the enormous costs of their AI division

– The compensation for individual AI leaders at Meta often exceeds what it cost to train DeepSeek’s entire model

– The organization may have become bloated as people sought to join the high-profile AI team

## Benchmark Discrepancies

AI professor Ethan Mollick identified apparent differences between the Llama 4 version used for benchmark testing and the one released to the public. His side-by-side comparison of answers shows the benchmark version giving much more comprehensive responses than the publicly available model.

The controversy deepened when users noticed discrepancies between the “Llama 4 Maverick Experimental” version (possibly used for benchmarks) and the released “Llama 4 Maverick” model, with the experimental version consistently producing longer, more detailed responses.

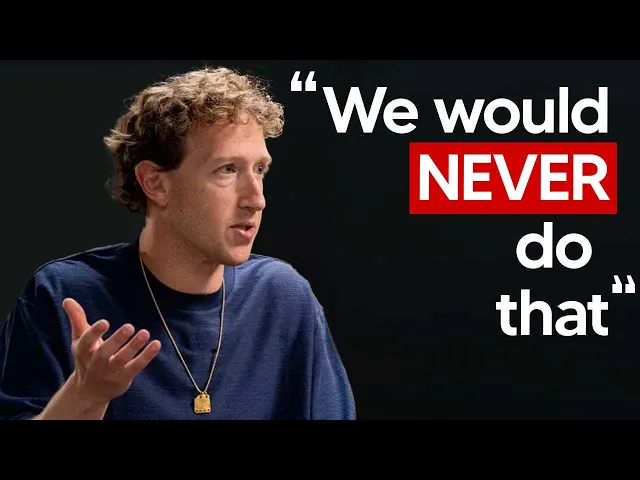

## Meta’s Response

Meta has acknowledged the reports of “mixed quality across different services” but attributed this to implementation issues rather than model performance:

– They denied training on test sets: “We simply would never do that”

– They stated that variable quality is due to needing to “stabilize implementations”

– They affirmed their belief that Llama 4 represents “a significant advancement”

## Independent Analysis

Artificial Analysis
