The command line didn’t die. It was waiting.

What a year inside Claude Code taught me about where computing is actually going

There’s a moment every programmer remembers. Not when they learned to code — that’s a different memory, usually involving a textbook and a lot of frustration. I mean the moment when the terminal stopped feeling like a place you visited and started feeling like a place you lived.

For me, that moment happened twice. Once in my early twenties, bent over a keyboard writing Bash scripts, watching the Unix command line respond to me like a conversation. And then again, exactly one year ago, when I typed my first prompt into Claude Code and felt that same electricity — something on the other side understood what I was trying to do.

Today is Claude Code’s first birthday. Anthropic shipped it as a research preview a year ago. Since then, developers have used it to build weekend projects, ship production apps, write code at the world’s largest companies, and help plan a Mars rover drive. I’ve been along for nearly the whole ride. Here’s what I’ve learned.

The return of the terminal

I grew up in a terminal. For years, Bash scripts were my native language — the way some people think in English and dream in another tongue, I thought in Unix. Fast, precise, a little ruthless. Then entrepreneurship pulled me away from the command line. The terminal became something I visited occasionally, like a hometown you moved away from.

Claude Code brought me back.

What surprised me wasn’t the capability — I expected Claude to be smart. What surprised me was the purity of it. No drag-and-drop. No modal windows. No GUI standing between my intention and the machine’s action. Just a blinking cursor, a prompt, and an AI that understood context the way a good senior engineer understands a codebase they’ve lived in for years.

It just worked.

To understand what I mean, you have to go back to the nineties.

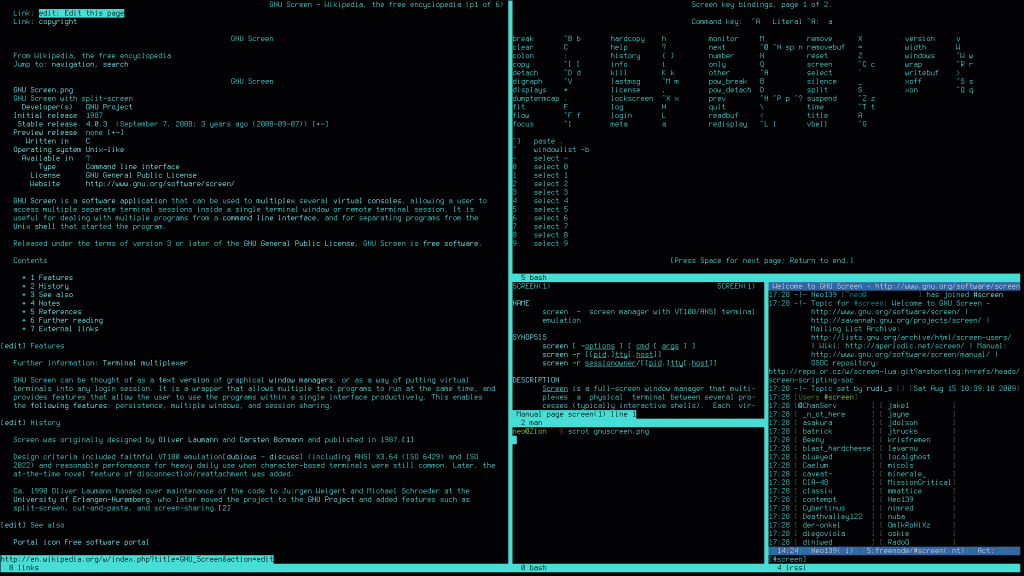

Before broadband, before Wi-Fi, before any of this, there was a dial-up modem and a program called GNU Screen. If you never used it, Screen was a terminal multiplexer — an unglamorous name for something almost magical. You’d dial in to a remote Unix server, and Screen let you run multiple virtual terminals inside that single fragile connection, all of them tunneled through the same 28.8k pipe humming in your wall. One window running a compile. One tailing logs on a different box. One midway through a Bash script you’d been nursing for days. All of them alive simultaneously, all running on borrowed time over a phone line that could drop without warning.

The real trick was detach and reattach. When your connection dropped — and it always dropped — Screen held your sessions open on the server side, patient and untouched. Processes kept running in the dark as if you’d never left. You’d reconnect, type screen -r, and there they were. Cursor exactly where you left it. The server hadn’t noticed you were gone.

I got obsessive about my Screen layouts. Every session had a name, a purpose, a position. I’d tile them across a large terminal window with a kind of deliberate craft — this pane for the live task, that one watching system logs, another tunneled into a completely different machine across the country. It was specialized, almost ceremonial. A way of working that was also a way of thinking. The terminal arranged like a cockpit, each window a different instrument.

That was the mid-nineties. The world ran on phone lines and patience.

Today I run Warp as my terminal — built for this era the way Screen was built for that one — with five split windows open, each running its own Claude Code session. The modem is gone. The dropped connections are gone. The cold sweat of watching a compile die mid-run because someone picked up the landline is gone. But the essential geometry of it — that instinct to tile your context across a single screen, to tend each pane like a separate thread of attention — that’s the same muscle memory, firing thirty years later like it never stopped.

The difference is what’s on the other side now. Back then, each pane reached a server. Today each pane reaches something that talks back. Five parallel conversations with a machine that never gets tired, never gets territorial, and never takes feedback personally.

Naval Ravikant put it plainly: “The computer is tireless. The computer is egoless, and it’ll just keep working. It’ll take feedback without getting offended.” That’s not a product feature. That’s a different kind of working relationship than any I’ve had before.

Learning to marshal agents

The first few months, I kept things simple. One session, one task, one thread of conversation. That’s the trap most new Claude Code users fall into — they treat it like a very smart search engine with a keyboard.

Claude Code is a system, not a session.

Boris Cherny, who built Claude Code at Anthropic, shared his own workflow earlier this year, and what struck me wasn’t how exotic it was — it was how deliberate. He runs five Claude instances locally in his terminal and another five to ten in his browser. He starts sessions from his phone in the morning and checks in on them at night, like a manager doing rounds on a team that never clocks out. Every common workflow in his inner loop lives in a slash command, committed to git in .claude/commands/, so Claude can use those workflows too.

That reframing — from user to manager — changed how I work.

I’ve built several /agents of my own now. Some simplify code after a heavy session. Others verify that what Claude built actually does what I asked. I have harnesses I trigger when I need to run structured tests. But I’ll be honest: I still catch myself slipping back into simple, linear mode. There’s a gravitational pull toward the one-on-one conversation. The multi-agent orchestration is where the leverage lives, but it takes discipline to stay there.

Cherny’s team has a subagent called code-simplifier that strips back the sprawl after Claude finishes a task. They have verify-app, which runs end-to-end tests before anything ships. These aren’t exotic configurations. They’re a good senior engineer’s checklist, automated and made persistent.

Plan mode: the question before the answer

The biggest workflow change in my first year wasn’t a command or a configuration. It was learning to slow down before Claude speeds up.

Cherny starts every serious task in Plan Mode — iterating on the plan until it’s solid, then switching to auto-accept and letting Claude execute. AI works best when it commits to a structured sequence: what to do, in what order, and why. Plan Mode forces that before a single line of code is written.

I’ve taken this further than most people probably do, and I’m not apologetic about it.

Before I write a single line of implementation, I’ll spend a full hour — sometimes more — in Plan Mode. Not skimming it. Not treating it like a formality before the real work starts. An actual hour of back and forth, sharpening the spec, narrowing the scope, stress-testing the approach. By the end I have a spec.md file I know I want. Not a rough outline. A real document — every section argued over, every assumption surfaced, every edge case named.

That spec.md is the contract. I make sure Claude Code knows it exists and treats it as the source of truth. Without it, the agentic loop fills gaps with reasonable-sounding assumptions that compound into large drift. With a locked spec, Claude has something to hold itself against. It can push back when my mid-session instructions start wandering from what we agreed. It doesn’t let me half-bake things.

That last part took me a while to appreciate. The instinct when you’re moving fast is to wave Claude forward and course-correct later. But half-baked work in an agentic system doesn’t stay contained — it propagates. A vague spec produces vague code produces a vague app. Garbage in, garbage out, just at higher speed. The hour I spend in Plan Mode is the most leveraged hour in any project. Everything downstream runs cleaner because of it.

My favorite version of this is something I stumbled into on my own. I’ll hand Claude Code a pile of data — a CSV, a folder of notes, a set of logs — and ask it to ask me what I want to do with it. Claude already knows the general shape of what I’m working on. So instead of me describing the app, Claude builds a CLI question-and-answer flow. It interviews me. Surfaces options I hadn’t thought of. We spar over the plan until it hardens into something I’d actually commit to. Then we build.

It’s the difference between walking into a tailor and saying “I need a suit” versus having the tailor pull up your measurements, study your posture, and ask questions you didn’t know to ask — before a single piece of fabric gets cut.
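For readers who want to picture the interview step, here is a minimal sketch in Python of a question-and-answer flow that ends in a spec document. It is not what Claude Code actually generates. The `Question` class, the three sample questions, the `interview` and `render_spec` helpers, and the scripted answers are all hypothetical, chosen only to show the shape: ask, fall back to a default when the answer is blank, then render the answers as sections of a spec.

```python
from dataclasses import dataclass


@dataclass
class Question:
    key: str           # section heading used in the rendered spec
    prompt: str        # text shown to the person being interviewed
    default: str = ""  # used when the answer is left blank


# Hypothetical questions; a real flow would be tailored to the data at hand.
QUESTIONS = [
    Question("Goal", "What should this tool do with the data?"),
    Question("Inputs", "Which files or folders does it read?", "data.csv"),
    Question("Out of scope", "What should it explicitly not do?"),
]


def interview(questions, ask=input):
    """Ask each question in order and return a {key: answer} dict."""
    answers = {}
    for q in questions:
        hint = f" [{q.default}]" if q.default else ""
        reply = ask(f"{q.prompt}{hint} ").strip()
        answers[q.key] = reply or q.default
    return answers


def render_spec(title, answers):
    """Render the answers as a small markdown document, one section each."""
    lines = [f"# {title}", ""]
    for key, answer in answers.items():
        lines += [f"## {key}", "", answer, ""]
    return "\n".join(lines)


# Scripted answers stand in for a live terminal session so the sketch
# runs non-interactively; the blank second answer falls back to the default.
scripted = iter(["Summarize weekly sales", "", "No dashboards"])
answers = interview(QUESTIONS, ask=lambda _prompt: next(scripted))
print(render_spec("Sales summary", answers))
```

Calling `interview(QUESTIONS)` without the `ask` argument would prompt at the terminal through `input`, and the rendered text could then be written to a file such as spec.md. Keeping the questions as data rather than hard-coded prompts is a deliberate choice here: the list is the part you would argue over and revise.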

The agentic loop and the joy of not being blocked

There’s a rhythm to Claude Code I’ve come to love. You start a task, describe the shape of it, share some context. Claude asks a clarifying question or two. Then the agentic loop begins — and you can step away.

When I come back, there are results to review. Not a half-finished answer waiting for my next instruction. Actual output: code written, tested, edge cases handled, dependencies installed. The loop powers through the work the way a good contractor powers through a renovation while you’re at the office. You come home and something has changed.

This is what Naval was pointing at when he said vibe coding takes people “from idea space, and opinion space, and from taste directly into product.” The gap between having an idea and having a working thing has compressed in a way that’s hard to describe until you’ve experienced it. I don’t manage the implementation anymore. I manage the direction.

And Anthropic keeps making the CLI nicer. Hooks for post-tool formatting, cleaner output, --teleport for moving sessions between local and remote. The tool gets better at the same pace I’m getting better at using it.

The tsunami Naval sees coming

Naval’s recent observation about what happens next is worth sitting with. He’s not describing a better IDE. He’s describing a shift in who gets to make things.

“Just like now anybody can make a video or anyone can make a podcast, anyone can now make an application,” he said. “So we should expect to see a tsunami of applications.”

He’s right — and one year in, I can see it from the inside. Claude Code reached a billion dollars in run-rate revenue within about six months of becoming generally available. Developers who once needed teams to build production applications are shipping solo. Boris Cherny wrote that all of his contributions to Claude Code in December 2025 were written using Claude Code and Opus 4.5. The tool building itself with itself.

Naval’s more interesting point is about what happens when the tsunami arrives. Not all of it survives. The best application for any given use case still tends to win its category. What changes is that more niches get filled — the lunar phase tracker, the very specific health log, the nostalgic game that was never big enough to justify an engineer’s time. These things exist now. The market for them was always there. The means to build them wasn’t.

Here’s what strikes me most, though. It isn’t just that more applications are coming. It’s where we’re building them from.

Computing started in a terminal. The command line was the original interface — clean, direct, unmediated. You typed, the machine responded. No menus, no icons, no abstraction softening the conversation between human and computer. It was demanding and it was pure. The GUI arrived in the eighties and nineties and opened computing to everyone who wasn’t comfortable in that rawness, which turned out to be almost everyone. The terminal got pushed to the edge. It became the province of sysadmins and Unix greybeards, a place most people passed through reluctantly on their way to something with a mouse.

Four or five decades of graphical computing — the Mac, Windows, the web browser, the smartphone, the app store — all of it accumulating toward something. We just didn’t know what. It turns out it was building toward the moment when natural language became a programming interface, and the most powerful place to use it was back in the terminal.

We have come full circle. And the circle isn’t closing — it’s compounding.

The CLI that birthed computing is now the cockpit for the most capable AI systems ever built. The Bash-fluent instincts that made someone dangerous in 1992 make them devastating in 2026, because those instincts — composability, scripting, piping one process into another, thinking in systems rather than applications — are exactly what agentic AI rewards. The hackers who never left the terminal are suddenly at the center of the biggest shift in software since the web. The people who are returning to it, as I did, are finding that everything they learned there still applies. It was never wasted.

This is a recursive loop, each pass more powerful than the last. Terminal → GUI → Web → Mobile → and now back to the terminal, except this time the terminal talks. It plans. It executes. It checks its own work and keeps going while you sleep.

I’ve never felt luckier to be curious. There has never been a better time to be the kind of person who wants to pull a system apart and see what’s inside — who treats a blinking cursor not as an obstacle but as an invitation. The tools have finally caught up to the instinct.

I think about that every time I open Warp and see my five split windows glowing. Each one is a conversation. Each conversation is a possibility. A year ago, I was a person who used to code. Now I’m something harder to name — part engineer, part manager, part editor — working alongside something tireless, egoless, and genuinely getting better every week.

If you grew up in a terminal, welcome back. If you’re arriving for the first time, you picked the right moment.

Happy birthday, Claude Code.

Let’s see what we build next.
