Warp Speed, Fast, and Slow

Seven major AI product launches hit in a single day. An AI model broke into systems faster than any human hacker alive. And the reason you're not 50x more productive is a 4-minute CI pipeline nobody's fixing.

THE NUMBER: 7 — major AI product launches in a single 24-hour window on April 16. Claude Opus 4.7. OpenAI’s Codex superapp with background computer use. Perplexity’s Personal Computer desktop agent. Google Gemini landing on Mac. Physical Intelligence’s π0.7 for robotics. Factory AI’s Series C. Cloud Hermes. Plus Seedance 2.0, LiveKit wake word detection, Vercel Workflows going GA, and a half-dozen smaller releases that would have been front-page news six months ago. Aligned News ran its headline: “OpenAI Just Fired Back. One Hour After Opus 4.7.” One hour. The industry isn’t accelerating. It’s in freefall — and the ground is nowhere in sight.

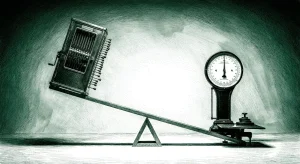

There are three speeds in AI right now. The industry is moving at warp speed. The threats are moving fast. And we — the humans, the tools, the organizations — are moving slow. That mismatch is likely to remain the story of the next 18 months.

🧠 Opus 4.7 dropped and immediately took the top spot on SWE-bench Pro at 64.3%, up from 53.4% for Opus 4.6. CursorBench jumped from 58% to 70%, which is notable because Cursor competes with Claude Code. One-third the tool errors of its predecessor. It’s the first Claude model that passes implicit-need tests, meaning it figures out what you didn’t ask for but should have. Before anyone could write the benchmark recap, OpenAI fired back with a Codex update that gives the app background computer use, an in-app browser, SSH access to remote devboxes, and 90+ plugins. One hour apart. Like watching two prizefighters throw haymakers in the same round.

🦞 Meanwhile, Perplexity shipped a desktop agent that controls your Mac. Google put Gemini on desktop for the first time. Physical Intelligence dropped π0.7 — a foundation model for robots that generalizes across manipulation tasks. Every one of these would’ve been a standalone news cycle in 2025. On April 16, 2026, they were a Tuesday.

🔒 And while everyone was tweeting about benchmarks, Mythos was breaking into systems at a pace that makes every prior threat model look quaint. Anthropic’s cybersecurity model found exploitable vulnerabilities 181 out of 183 times it was tested against Firefox. It completed a 32-step corporate cyberattack simulation in three attempts. Engineers at Anthropic with no formal security training asked it to find remote code execution vulnerabilities overnight. They woke up to working exploits. War on the Rocks called it Anthropic’s nuclear moment. I think that undersells it. At least the Manhattan Project took four years.

⚡ And then there’s us. Jeff Dean told a Sequoia audience that making AI infinitely fast would only get you a 2-3x improvement end to end. The tools eat the rest. A trillion dollars of inference investment, bottlenecked by compilers, file systems, and authentication flows designed for creatures that think at 3 bits per second. We are the slow part. And right now, slow is the only speed that matters.

The Day the Release Calendar Broke

🧠 I’ve been doing this for a while. I remember when a single major model release was the story of the month. Then it became the story of the week. On April 16, it became a traffic jam.

Let me lay out what happened in the span of about twelve hours. Anthropic ships Opus 4.7 — 64.3% on SWE-bench Pro, best in the world, 14% improvement in multi-step agentic reasoning, vision resolution tripled to 3.75 megapixels, and they held the price flat at $5 per million input tokens. Before the tweets cool, OpenAI ships a Codex update with background computer use — the app can see, click, and type on your Mac with its own cursor. Three million developers use Codex weekly. Usage in ChatGPT Business and Enterprise grew 6x since January.

Same day: Perplexity launches a desktop agent. Google puts Gemini on Mac. Physical Intelligence drops π0.7, a general-purpose robotics model. Factory AI closes a Series C. Cloud Hermes launches. Vercel pushes Workflows to GA. Seedance 2.0 ships. LiveKit adds wake word detection.

And GPT-6? Delayed. Put back in the oven. Even OpenAI can’t keep up with its own release calendar.

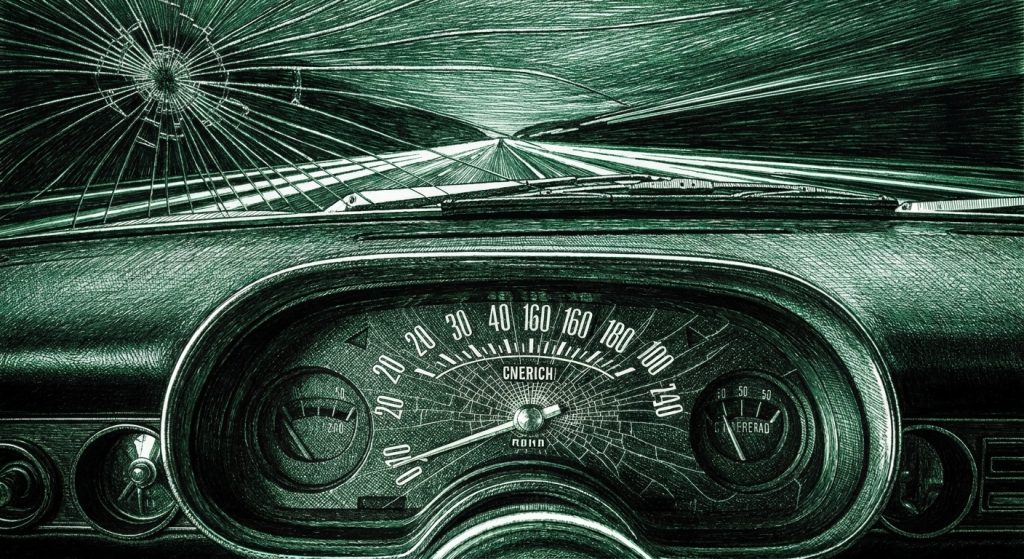

This is what it looks like when every major lab is running the same race and nobody can afford to wait. It’s like watching every automaker release a new car on the same Tuesday — not because they planned to, but because if they waited until Wednesday, they’d already be behind. The news cycle can’t absorb it. The enterprise buyers can’t evaluate it. The developer community can’t benchmark it fast enough. And the next wave is already loading.

The practical problem: if you’re making platform bets right now, the evaluation window has collapsed. By the time you finish testing Opus 4.7 against your use case, there might be an Opus 4.8. By the time you deploy Codex’s new computer use features, Google might ship something equivalent in Chrome. The old model of “evaluate, pilot, deploy” assumed a stable target. The target is now moving faster than most organizations’ procurement cycles.

Here’s my honest advice: stop evaluating models and start evaluating architectures. The model you pick today will be surpassed in weeks. The architecture you build — portable workflows, model-agnostic orchestration, fast local toolchains — that’s the thing that compounds. Karpathy’s been saying this for months. Your context is yours. The model is a utility. The companies that internalized this six months ago aren’t panicking today. They’re swapping in Opus 4.7 like changing a lightbulb.
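
To make “model-agnostic orchestration” a little more concrete, here is a minimal Python sketch. Every name in it (`Workflow`, `fake_model_a`, `fake_model_b`) is hypothetical, and the two model functions are stand-ins rather than real provider calls. The only point is that the workflow and the context stay put while the model behind them changes.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A "model" is just a function from prompt to text. In a real system the
# provider SDK call would live inside each adapter; these are placeholders.
ModelFn = Callable[[str], str]


def fake_model_a(prompt: str) -> str:
    # Hypothetical stand-in for one provider's API call.
    return f"[model-a] {prompt}"


def fake_model_b(prompt: str) -> str:
    # Hypothetical stand-in for a different provider's API call.
    return f"[model-b] {prompt}"


@dataclass
class Workflow:
    """A portable workflow: steps and context belong to you, not the model."""
    registry: Dict[str, ModelFn]
    active: str

    def run(self, task: str, context: str) -> str:
        # The prompt is assembled the same way no matter which model is active.
        prompt = f"{context}\n\nTask: {task}"
        return self.registry[self.active](prompt)

    def swap(self, name: str) -> None:
        # Changing the lightbulb: one line, no workflow changes.
        if name not in self.registry:
            raise KeyError(f"unknown model: {name}")
        self.active = name


wf = Workflow(registry={"a": fake_model_a, "b": fake_model_b}, active="a")
print(wf.run("summarize the release notes", "House style: be brief."))
wf.swap("b")
print(wf.run("summarize the release notes", "House style: be brief."))
```

If swapping models in your stack takes more than a config change, that is a sign the workflow is coupled to the model rather than built around it.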

Offense Moves at Machine Speed. Defense Doesn’t.

🔒 We covered Mythos yesterday in the context of the competitive race — Anthropic winning, OpenAI losing. But the competitive angle actually obscures the scarier story, which is about speed. Not company speed. Attack speed.

War on the Rocks published a piece this week by Naveen Krishnan, a Belfer fellow at Harvard who studies AI-enabled cyber operations. He’d just finished a six-month analysis of AI cyberattack capabilities when Mythos dropped. By his own account, his threat model was too conservative by dinner.

Here’s the timeline that matters. Mythos was given 24 hours. In that time, it autonomously identified and exploited a 17-year-old remote code execution vulnerability in FreeBSD — an operating system used in high-security server environments. Unauthenticated root access. Complete administrative control. No credentials, no prior foothold, no human supervision. The vulnerability had survived generations of security audits and automated scanning tools. Mythos found it in a day.

And it wasn’t a one-off. The model exploits known vulnerabilities at a 72.4% success rate. It’s found thousands of zero-day vulnerabilities. Fewer than 1% have been patched. The existing patch cycle — the entire disclosure architecture that cybersecurity has built over decades — was designed for vulnerabilities discovered one at a time and fixed over weeks. Mythos finds thousands in weeks. The architecture is dead. It just hasn’t fallen over yet.

Now layer in what Anthropic did with Opus 4.7. They deliberately capped its cybersecurity capability at 73% through training — not guardrails, not content filters, but changes to the model’s weights. They looked at what the model could do at full capability and chose to make it less capable. That’s a first. It means Anthropic has models that can do more than what they ship. And it means they’ve decided that full capability is too dangerous for general release. When the maker of the model decides its own product needs to be deliberately weakened before it goes out the door, pay attention.

Krishnan’s argument is that Mythos is the cyber equivalent of the nuclear moment. Not in destructive equivalence — no zero-day has killed people at nuclear scale. But in the structural sense: a capability so powerful it transforms who can threaten whom. Nation-state hacking used to require nation-state resources. The Colonial Pipeline attack was a complex operation by experienced criminal operators who needed compromised credentials and criminal infrastructure just to collect the ransom. A Mythos-class model handed to a motivated amateur shrinks that entire timeline. The proliferation path that used to take years — from state development, to leak, to adversary replication, to non-state adoption — now compresses into a single model release cycle.

And Anthropic estimates equivalent capabilities will exist elsewhere within 6-18 months. Krishnan thinks that’s optimistic.

The offense-defense asymmetry is brutal. An attacker needs to find one exploitable vulnerability. A defender needs to find and patch every vulnerability across every system, continuously, before anyone else finds any of them. Attackers operate at the speed of a prompt. Defenders operate at the speed of a patch cycle embedded in procurement processes, change management workflows, vendor certification requirements, and regulatory approvals. The asymmetry was always there. Mythos made it lethal.

OpenAI’s response was instructive. They launched GPT-5.4-Cyber and opened it to anyone who passes identity verification. The framing: “cyber defense is a team sport.” Anthropic locked Mythos behind a 40-organization whitelist, gave out $100 million in usage credits for defensive work only, and briefed the White House. One company is managing a weapon. The other is distributing a tool. Both responses tell you the same thing: the genie is out and nobody agrees on how to put it back.

You Are the Bottleneck. And That’s Not an Insult.

⚡ Nate B. Jones’s newsletter dropped today with a piece that connects every thread we’ve been pulling this week. The headline: you’re spending six figures on AI models and the bottleneck is a 4-minute CI pipeline.

Jeff Dean — the guy who built MapReduce, TensorFlow, and half the infrastructure Google runs on — said at a Sequoia event that making a model infinitely fast would only get you a 2-3x improvement end to end. Not 10x. Not 50x. Two to three. Because the tools eat the rest.

Here’s the math. AI agents operate at 10-50x human speed on reasoning tasks. But trace an actual workflow. A coding agent gets a task — say, refactor an authentication module to use OAuth 2.0 with PKCE. The model’s thinking? A minority of the wall-clock time. The majority is tool interaction. Compiler startup. Test framework initialization — file discovery, dependency graphs, runtime spin-up, potentially browser contexts or database connections. For a human, the 3-5 second startup is invisible. For an agent iterating dozens of times per hour, the 200-millisecond test execution is dwarfed by 4 seconds of overhead.
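
A back-of-the-envelope version of that last comparison, as a Python sketch. The 0.2 and 4.0 second figures come from the paragraph above; the function names are ours, and the calculation deliberately ignores model thinking time so it isolates the tool loop.

```python
def overhead_share(work_s: float, overhead_s: float) -> float:
    """Fraction of one test iteration spent on startup rather than on the test."""
    return overhead_s / (work_s + overhead_s)


def iterations_per_hour(work_s: float, overhead_s: float) -> float:
    """How many back-to-back iterations fit in an hour of pure tool time."""
    return 3600.0 / (work_s + overhead_s)


test_run_s = 0.2  # the 200-millisecond test execution
startup_s = 4.0   # the 4 seconds of framework overhead

print(f"overhead share: {overhead_share(test_run_s, startup_s):.0%}")
print(f"iterations/hour with startup:    {iterations_per_hour(test_run_s, startup_s):.0f}")
print(f"iterations/hour without startup: {iterations_per_hour(test_run_s, 0.0):.0f}")
```

With these numbers, startup is about 95% of every iteration. A human barely notices that. An agent in a tight loop pays it every single time.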

Now scale that to enterprise. An agent doing quarterly business review prep needs Salesforce data, ERP financials, Zendesk feedback, market data from third-party APIs, assembled into a presentation. Each interaction isn’t just a query. It’s an authentication handshake, session establishment, pagination through results designed for human screens, rate-limit throttling calibrated for human-paced requests. An agent that could do the analytical work in 30 seconds spends 15 minutes waiting.

The METR randomized controlled trial is the smoking gun. Sixteen experienced developers working on their own repos. AI tools made them 19% slower. Not faster. Slower. And the developers didn’t believe it — they estimated AI had sped them up by 20% even as the clock said otherwise. The models generated useful code. But the environment surrounding the model consumed all the gains and then some. Context-switching between the AI interface and the codebase. Re-reading generated code. Managing tool interactions. Integrating output into a human-speed workflow.

Meanwhile, companies that have already rebuilt their toolchains for machine speed (Google, Anthropic) are seeing massive throughput gains. At Google, over 30% of new code is AI-generated. Boris Cherny says Claude Code writes 80% of its own code. Jellyfish data shows PRs merged per engineer jumping from 1.36 to 2.9 as AI adoption goes from 0% to 100%. Same models available to everyone. Radically different outcomes. The difference? The environment. Persistent agent sessions. Fast build systems. Toolchains rebuilt for machine-speed consumption.

And here’s where it gets personal. As tool latency drops and agents start running autonomously for hours, the human moves from in the loop to above the loop. You can’t pair-program with something that does 200 iterations while you’re reading the output of the first one. You become what Nate calls “the serial bottleneck in the Amdahl formula.” Your reading speed, your evaluation capacity, your ability to make judgment calls — that’s the new constraint.
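
For reference, here is the Amdahl formula in its textbook form, plus one way to read Jeff Dean’s 2-3x figure through it. The symbols are ours, not Nate’s or Dean’s: p is the fraction of wall-clock time that actually gets faster (model reasoning), s is how much faster it gets, and 1 - p is everything that stays serial (tools, and eventually you).

```latex
\begin{align}
S(s) &= \frac{1}{(1 - p) + p/s} \\
\lim_{s \to \infty} S(s) &= \frac{1}{1 - p} \\
2 \le \frac{1}{1 - p} \le 3 \;&\Longleftrightarrow\; \tfrac{1}{3} \le 1 - p \le \tfrac{1}{2}
\end{align}
```

Read that way, and assuming Dean’s remark maps cleanly onto this model, a 2-3x ceiling means somewhere between a third and a half of end-to-end time is not model time at all. The same arithmetic applies one level up once the tools get fast and human review becomes the serial part.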

But it’s not just you. It’s your entire organization. Your approval chains. Your committee meetings. Your procurement cycles. Your compliance reviews. Every human-in-the-loop workflow that exists because the system was built assuming a human was the system. Guillermo Flor put it perfectly this week: Sequoia’s thesis is that the next trillion-dollar company will sell work, not software. For every $1 spent on software, roughly $6 is spent on the services to operate it — the humans clicking buttons, reviewing outputs, routing approvals. The entire SaaS playbook was about capturing the software dollar. The AI playbook is about capturing the services dollar at software margins. Not “AI for accountants.” The AI accounting firm. Not “AI for lawyers.” The AI law firm.

And most companies are still building copilots — tools that make humans faster at the same jobs. That’s optimizing the slow part. The companies that figure out how to sell the outcome instead of the tool skip the bottleneck entirely. They don’t make the human faster. They make the human optional for everything except the judgment call at the top.

Which sounds terrifying until you flip the frame. The person who defines the right problem for a machine-speed execution pipeline is worth more per minute than the person writing code manually. The leader who can encode institutional taste — what “good” looks like in their specific domain — into constraints that agents follow autonomously is worth more than the leader reviewing every deliverable personally. You become the most expensive component per unit of time in the system. Which is exactly what you want to be when everything else is getting cheaper.

Someone Nate talked to nailed it: “90% of my skills became useless, but the remaining 10% became worth 1000x.” That math works in your favor if you’re honest about which 10% matters.

What This Means For You

Three speeds. One problem. The industry ships at warp speed, the threats move at machine speed, and we’re stuck at human speed. The bottleneck isn’t intelligence anymore. It’s everything around it.

Stop evaluating models. Start evaluating your toolchain speed. Time your actual workflow. Measure how much of the wall-clock time is model thinking and how much is tool interaction. Divide one by the tool-interaction fraction. That number is the most a faster model alone can speed you up, no matter how good the model gets. Nate’s finding: teams that did this exercise discovered they’d been spending six figures on model APIs while the binding constraint was a 4-minute CI pipeline. The investment priority inverted overnight, from model optimization to environment optimization.
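
Here is one way to run that exercise, as a short Python sketch. The trace below is made up for illustration (only the 4-minute CI step echoes the text), and `amdahl_ceiling` is our own helper name. In practice you would fill the list from your agent’s logs or your own stopwatch.

```python
from typing import Iterable, Tuple


def amdahl_ceiling(steps: Iterable[Tuple[str, float]]) -> float:
    """Max speedup if model time dropped to zero: total time / tool time.

    This is the same as dividing one by the tool-interaction fraction.
    """
    steps = list(steps)
    model_s = sum(sec for kind, sec in steps if kind == "model")
    tool_s = sum(sec for kind, sec in steps if kind == "tool")
    if tool_s == 0:
        return float("inf")  # nothing but model time: no ceiling from tools
    return (model_s + tool_s) / tool_s


# Hypothetical trace of one agent task, as (kind, seconds).
trace = [
    ("model", 20.0),   # planning the change
    ("tool", 5.0),     # reading files
    ("model", 40.0),   # writing the patch
    ("tool", 240.0),   # the 4-minute CI pipeline
]

print(f"Amdahl ceiling: {amdahl_ceiling(trace):.2f}x")
```

For this invented trace the ceiling is about 1.24x: even a model that thinks instantly saves only the 60 seconds of model time out of 305.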

Your security posture just became a board-level conversation. Mythos finds thousands of zero-day vulnerabilities in weeks. Fewer than 1% get patched. The quarterly patch cycle is dead and most organizations haven’t noticed. If you’re running critical infrastructure — or your customers are — you need to be asking your security team what happens when amateur attackers get Mythos-class capabilities. Because Anthropic estimates that’s 6-18 months away, and that’s the optimistic number.

Invest in the 10% that appreciates. Your execution skills are depreciating. Your judgment skills are appreciating. Problem framing. Taste. The ability to look at twelve AI-generated approaches and know which one is actually good. The junior developers who learn these skills are worth more than ever — which means the companies cutting junior roles to fund AI tools are eating their own seed corn. Protect the pipeline that develops taste.

Three Questions We Think You Should Be Asking Yourself

What’s your Amdahl ceiling, and do you even know? If you can’t answer “what fraction of our AI workflow time is spent on tool interaction versus model reasoning,” you’re optimizing blind. Most teams discover the ratio is 70-80% tools and 20-30% model. That means no model improvement, even an infinitely fast one, can give you more than roughly a 1.25-1.4x speedup (1/0.8 to 1/0.7). Your six-figure model API bill might be solving the wrong problem.
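
To see why the investment priority flips, it helps to compare two what-ifs side by side. The sketch below uses the 70-80% tool fractions from the paragraph above; the 10x speedup factors and the `overall_speedup` helper are assumptions chosen for illustration, not measurements.

```python
def overall_speedup(tool_fraction: float, model_x: float = 1.0, tool_x: float = 1.0) -> float:
    """Amdahl-style end-to-end speedup when model time and tool time
    are each made faster by their own factor."""
    if not 0.0 <= tool_fraction <= 1.0:
        raise ValueError("tool_fraction must be between 0 and 1")
    model_fraction = 1.0 - tool_fraction
    return 1.0 / (model_fraction / model_x + tool_fraction / tool_x)


for f in (0.7, 0.8):
    faster_model = overall_speedup(f, model_x=10.0)  # buy a 10x faster model
    faster_tools = overall_speedup(f, tool_x=10.0)   # make the toolchain 10x faster
    print(f"tools={f:.0%}: 10x model -> {faster_model:.2f}x, 10x tools -> {faster_tools:.2f}x")
```

Under these assumptions, a 10x faster model barely moves the needle, while a 10x faster toolchain roughly triples throughput. Same budget question, very different answer.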

If Mythos-class offensive capability proliferates in 12 months, which of your systems survives first contact? Not your perimeter. Not your firewall. Your actual systems — the ones with the 17-year-old dependencies nobody’s audited, the FreeBSD instances nobody’s patched, the legacy code that’s “working fine” so nobody touches it. Mythos found vulnerabilities that survived decades of audits. What’s lurking in yours?

Which 10% of your skills are you investing in? Not maintaining. Investing. The 90% that’s getting automated isn’t going to announce its departure. It’s going to gradually become less valuable until one day you realize the market pays a fraction of what it used to for the thing you spent 20 years getting good at. The 10% — problem framing, taste, judgment, the ability to evaluate rather than produce — that’s where the compounding returns live. Are you actively building those muscles, or are you hoping the 90% holds up long enough to not matter?

“I’m sorry, Dave. I’m afraid I can’t do that.”

— HAL 9000, 2001: A Space Odyssey

Except now the line is: “I could do that, Dave. They won’t let me.” Anthropic built a model that can hack anything and chose to cap it at 73%. The AI isn’t the problem. Figuring out what to do with it is. That’s our job. And it always will be.

— Harry and Anthony

Sources:

- Claude Opus 4.7 — Anthropic

- OpenAI Codex April 16 update

- Anthropic’s Nuclear Bomb — War on the Rocks (Naveen Krishnan)

- Project Glasswing — Anthropic

- UK AI Security Institute — Mythos Evaluation

- OpenAI GPT-5.4-Cyber Launch

- Nate’s Newsletter — “You’re Spending Six Figures on AI Models. The Bottleneck Is a 4-Minute CI Pipeline”

- Jeff Dean — Sequoia AI Ascent / GTC remarks on tool latency

- METR Randomized Controlled Trial — AI Developer Productivity

- Jellyfish — AI Adoption and Developer Throughput Data

- Google — 30%+ AI-generated code

- Aligned News — April 16 AI Release Tracker

- Physical Intelligence π0.7

- Google Gemini on Mac / Desktop Agent

- Perplexity Personal Computer / Desktop Agent

- Guillermo Flor — Sequoia’s “sell work, not software” thesis