OpenAI Deleted ‘Safely.’ NVIDIA Reports. Karpathy Is Still Learning

THE NUMBER: 6 — times OpenAI changed its mission in 9 years. The most recent edit deleted one word: safely.

TL;DR

Andrej Karpathy — the engineer who wrote the curriculum that trained a generation of developers, ran AI at Tesla, and helped found OpenAI — posted in December that he’s never felt so behind as a programmer. Fourteen million people saw it. Tonight, NVIDIA reports Q4 fiscal 2026 earnings after market close: analysts expect $65.7 billion in revenue, up 67% year over year. The numbers will almost certainly land. What matters is what Jensen Huang says about the next two quarters to a room full of people who’ve started wondering if the AI capex boom is built on solid ground. And this week, Sam Altman flew to India and called AI water usage concerns “completely untrue, totally insane,” while OpenAI quietly completed a for-profit restructuring that removed the word “safely” from its mission statement for the first time in nine years.

The pattern: the AI industry spent two years making promises. This week the receipts are coming due simultaneously. Karpathy is holding himself accountable. The market is holding Jensen accountable. Nobody is holding Altman accountable, which is its own kind of answer. The action: pull your OpenAI contracts before Thursday. Those representations were made before the mission changed.

Fourteen million people stopped scrolling when Andrej Karpathy admitted, in December, that he’s never felt this behind as a programmer. Not a worried CTO. Not a developer three years out of school. Karpathy. The man who wrote the neural net curriculum at Stanford, ran AI at Tesla, helped found OpenAI, and built the educational framework that put modern deep learning in every developer’s hands. He said the profession is being “dramatically refactored,” that he could be 10x more productive if he figured out how to string together what’s available, and that failing to do so “feels like a skill issue.” Then he listed what he hasn’t mastered yet: agents, subagents, their prompts, contexts, memory, modes, permissions, tools, plugins, skills, hooks, MCP, LSP, slash commands, workflow integrations, and the mental model required to reason about probabilistic systems that don’t behave the same way twice. He’s not describing a productivity gap. He’s describing a new kind of engineering, and saying he’s still learning it.

Tonight after market close, Jensen Huang walks into an earnings call carrying $650 billion of other people’s expectations. NVIDIA reports Q4: $65.7 billion in revenue expected, up 67%, $1.53 a share, up 72%. NVIDIA has beaten estimates eight quarters in a row. The record isn’t the story. The story is that 89% of NVIDIA’s revenue comes from a single customer category (hyperscaler data centers), and Wall Street is newly, nervously wondering whether those customers will keep spending at the pace they promised. Last quarter, Huang called Blackwell demand “off the charts” and said cloud GPUs were “sold out.” Tonight he has to get back on stage and mean it again. The call is at 5 PM.

Meanwhile, Sam Altman flew to India and called AI water usage concerns “completely untrue, totally insane” with “no connection to reality.” He compared training an AI to raising a human child. (“It takes 20 years of life and all the food you eat.”) He didn’t mention, from the stage or anywhere else, that OpenAI had just removed the word “safely” from its mission statement. Six mission statements in nine years. This is the sixth. And it’s the first one that doesn’t include the company’s original self-imposed constraint.

These are three flavors of the same problem. The AI industry spent two years making promises: to developers, to investors, to enterprise buyers, to regulators. What it could do. How fast it would grow. What it would never become. This week, the receipts are coming due at once.

Karpathy feels behind. That’s not his problem. It’s yours.

“I’ve never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and between. I have a sense that I could be 10X more powerful if I just properly string together what has become available.”

— @karpathy, December 2025

Two months after Karpathy posted that, it’s still moving through group chats, board prep emails, and engineering stand-ups. Fourteen million views is a number. The reason it keeps circulating is who said it.

I’ve shipped through four major platform shifts in this industry. The engineers who caught them early always described the same thing: a moment of vertigo right before it became advantage. Assembly to C. Mainframe to client-server. The web. Mobile. Every time, there was a window where some developers felt the ground move and others kept writing the old way. What Karpathy is describing is that window, except this time it opened in about 90 days and the new ground is genuinely strange.

He’s precise about why. It’s not just new tools. The new abstraction layer includes agents and subagents, their prompts, contexts, memory, modes, permissions, tools, plugins, skills, hooks, MCP, LSP, slash commands, and workflow integrations, and you have to build a mental model for systems that are “fundamentally stochastic, fallible, unintelligible and changing entities suddenly intermingled with traditional engineering.” Not systems you debug by tracing execution. Not systems that behave the same way twice. Probabilistic collaborators with failure modes you learn by working with them, not by reading documentation. That’s a different cognitive skill than everything that came before it, and Karpathy, who literally wrote the curriculum, is saying he’s still building that skill.

Here’s the number that matters for enterprise teams: if Karpathy has access to every model, every environment, every paper, and still estimates he could be 10x more effective, what’s the multiplier for your senior engineers? Working with tools they’ve had for less than a year, under production pressure, without protected time to experiment? The gap between “we’ve deployed AI tools” and “our team is effective with AI” isn’t a tooling gap. It’s a mastery gap. And that gap is widening every week that the tools improve and your people don’t.

The companies that figure this out first aren’t just faster. They’re working on problems that used to require a team of ten. That’s not an efficiency gain. It’s a different business.

REALITY CHECK: Karpathy’s post is confirmed on X, full text and engagement intact. | Implied: if the person who designed modern neural net curricula is publicly naming a mastery gap, the gap inside enterprise engineering teams is larger, not smaller. | What could go wrong: the “10x productivity” frame could set expectations that lead to agent deployments before teams have the judgment to supervise them, which is a faster way to fail than not deploying at all.

Key takeaway: Stop counting Copilot seats. Find the person on your team who has put in 40+ deliberate hours orchestrating agents and knows what they don’t know yet. If you can’t name them, you’re not behind Karpathy. You’re behind everyone who can.

- Andrej Karpathy: “I’ve never felt this much behind as a programmer” — X

- Karpathy’s Thread Signals AI-Driven Development Breakpoint — Futurum

- Even AI Experts Feel Behind — Medium

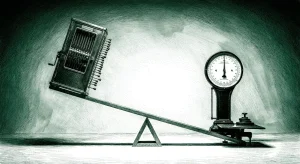

$65.7 billion. But that’s not the number Jensen Huang needs to deliver tonight.

NVIDIA reports after market close. Analysts have been precise: $65.7 billion in revenue, up 67% year over year. $1.53 per share, up nearly 72%. Eight consecutive quarters of beating estimates, average upside surprise of 7.46%. By every historical measure, this should be another clean night for Jensen Huang.

It won’t feel that way.

CNBC’s headline going into tonight was “Nvidia earnings report collides with Wall Street skepticism over AI spending.” The skepticism isn’t about Q4. It’s about what comes after. Alphabet, Amazon, Meta, and Microsoft have committed to $650 billion combined in AI infrastructure spending this year, up from $410 billion in 2025. That river is what NVIDIA has been swimming in. The question hanging over tonight’s call is whether the river keeps flowing, because if it doesn’t, there’s nowhere else for NVIDIA to go.
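For readers who want to check the growth math behind these figures, here is a minimal sketch. It uses only the numbers quoted above; the helper names `implied_base` and `growth_pct` are illustrative, not from any source, and the implied year-ago values are back-of-envelope estimates rather than reported results.

```python
def implied_base(current: float, growth_pct: float) -> float:
    """Back out the prior-period value from a current value and a growth rate in percent."""
    return current / (1 + growth_pct / 100)


def growth_pct(previous: float, current: float) -> float:
    """Percentage change from previous to current."""
    return (current / previous - 1) * 100


# Inputs quoted in the article (consensus estimates, not reported results).
prior_revenue_b = implied_base(65.7, 67)   # revenue in $ billions, up 67%
prior_eps = implied_base(1.53, 72)         # earnings per share, up roughly 72%
capex_growth = growth_pct(410, 650)        # hyperscaler AI capex, $ billions

print(f"Implied year-ago revenue: ${prior_revenue_b:.1f}B")
print(f"Implied year-ago EPS: ${prior_eps:.2f}")
print(f"Hyperscaler capex growth: {capex_growth:.1f}%")
```

On those inputs, the consensus implies a year-ago quarter of roughly $39.3 billion and about $0.89 a share, and the capex commitment works out to growth of about 58.5%. In other words, the estimates have NVIDIA growing faster than the spending pool that feeds it.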

That last point is the one almost nobody is saying plainly: 89% of NVIDIA’s revenue came from data centers last quarter. There is no meaningful fallback. No consumer hardware line that absorbs the shock. No gaming segment that carries the quarter. NVIDIA is a single-category company now. The category is AI infrastructure. The customers are a handful of hyperscalers who all just made enormous capital commitments they could, in theory, slow. That’s the actual risk Huang is walking into tonight, and the Q4 numbers, however clean, don’t address it. Only his words do.

Two things have happened since January that put the doubt there. DeepSeek’s R1 showed that competitive reasoning models could be trained at dramatically lower cost than U.S. frontier models. That raised a real question about whether the capex cycle needed to run at its current pace. Then Meta signed a $100 billion, five-year AMD partnership for 6 gigawatts of GPU deployment, the first major non-NVIDIA AI hardware commitment at hyperscaler scale. The AMD deal doesn’t break NVIDIA. But it’s the first hard evidence that the big buyers are engineering alternatives. Every other hyperscaler saw that announcement and started running RFPs.

Huang needs three things from this call. Guidance that holds the narrative: any miss on forward revenue, even against a clean Q4, gets read as the first crack and reprices the whole category. Blackwell execution, not just demand. “Sold out” is a supply claim. Shipping at scale is the execution claim, and those are different things. And something credible on China, now that H200 chips are back on the menu after the Trump administration cleared the export restriction. New upside revenue comes with geopolitical risk that enterprise buyers are watching carefully.

One more thing nobody’s putting in the earnings preview: six gigawatts of AMD GPUs for Meta alone requires the power output of roughly six nuclear reactors. This stopped being a chip story. It became an energy infrastructure story. Watch whether any analyst on tonight’s call asks Huang about the power supply chain. If they don’t, the analysis is still incomplete.
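The reactor comparison is simple division, and it can be sketched under stated assumptions. The roughly 1 gigawatt of electrical output per large reactor is an assumed round figure, not a number from the AMD or Meta announcement, and the annual energy line assumes the full 6 gigawatts runs continuously, which real deployments may not.

```python
REACTOR_OUTPUT_GW = 1.0   # assumption: roughly 1 GW electrical per large reactor
HOURS_PER_YEAR = 24 * 365


def reactors_needed(deployment_gw: float, reactor_gw: float = REACTOR_OUTPUT_GW) -> float:
    """Number of reactor-equivalents needed to supply a given load."""
    return deployment_gw / reactor_gw


def annual_energy_twh(deployment_gw: float) -> float:
    """Energy drawn over one year at continuous full load, in terawatt-hours."""
    return deployment_gw * HOURS_PER_YEAR / 1000  # GWh -> TWh


deployment_gw = 6.0  # the Meta and AMD figure quoted in the article
print(f"Reactor-equivalents: {reactors_needed(deployment_gw):.0f}")
print(f"Annual energy at full load: {annual_energy_twh(deployment_gw):.2f} TWh")
```

Change `REACTOR_OUTPUT_GW` to test other assumptions about reactor size. Under the defaults, the deployment draws about 52.56 terawatt-hours a year, which is why the power supply chain belongs in the earnings conversation.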

REALITY CHECK: Q4 consensus figures confirmed across multiple analyst sources. 89% data center revenue figure from Q2 FY2026 NVIDIA filing. | Implied: a clean beat plus confident Q1-Q2 guidance would validate the AMD deal as healthy competition rather than a warning sign. | What could go wrong: Blackwell production constraints could force guidance below what the narrative requires, and any hesitation in Huang’s voice on hyperscaler commitment could trigger a category-wide repricing of every AI infrastructure stock simultaneously.

What to watch: The call is at 5 PM EST. Skip the headline number tomorrow morning. Read the transcript. The signal is in how Huang talks about the next two quarters: the words he chooses, what he answers directly, and what he deflects.

- Nvidia earnings report collides with Wall Street skepticism over AI spending — CNBC

- Feb. 25 Will Be a Huge Day for Nvidia — Motley Fool

- Prediction Markets Are 95% Sure Nvidia Will Beat Earnings — Motley Fool

- AMD and Meta Announce 6 Gigawatt GPU Partnership — AMD Official

- NVIDIA Q4 FY2026 earnings preview — S&P Global

- Nvidia Earnings, AI Spending Volatility, Huang — Israel Hayom

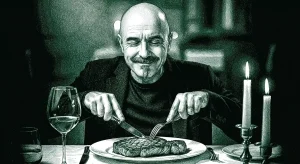

Altman called the critics insane. He’d just deleted the only word that ever cost OpenAI anything.

Sam Altman was in New Delhi for the India AI Impact Summit. A reporter asked about AI’s water consumption. He didn’t engage with the numbers. He called them “completely untrue, totally insane” with “no connection to reality,” then compared training an AI model to raising a child: “It also takes a lot of energy to train a human. It takes like 20 years of life, and all the food you eat before that time, before you get smart.”

The specific water-per-query figures that circulated online were overstated. On that narrow point, Altman has ground. But there’s a difference between correcting a bad number and calling critics insane. One is a rebuttal. The other is a posture. And the posture is hard to square with what OpenAI filed the same week.

OpenAI completed its for-profit restructuring this month. The company took $6.6 billion in new funding last year and $40 billion from SoftBank, contingent on becoming a more traditional for-profit entity with profit-sharing investors on the board. The mission statement changed as part of that process. New version: “ensure that artificial general intelligence benefits all of humanity.” Every previous version, in every IRS filing since the company was founded, read: “ensure that artificial general intelligence safely benefits all of humanity.” Fortune counted six mission changes in nine years. This is the sixth. The word moved from a noun in the founding commitment to an adverb buried in a secondary clause. Safe AGI is still the plan. Just not the commitment.

OpenAI’s response is that the rephrasing serves the same goal. Maybe. But “safely” was the only word in that document that created operational friction with profit maximization. Its removal, timed precisely to the moment investors with direct profit stakes joined the board, isn’t subtle. It’s a document. You can read it.

Altman spent the rest of the week fighting on multiple fronts. He called Elon Musk’s space data center idea “ridiculous” on thermal and repair cost grounds. He posted on X that OpenAI’s internal goals include an automated research intern by September 2026 and a fully autonomous AI researcher by March 2028. He called for global AI regulation “similar to nuclear safeguards.” Each statement is a headline. Together, they’re what it looks like when a CEO is trying to reestablish control of a narrative by generating new ones. I’ve watched this pattern through enough product cycles to recognize it. You don’t fight on five fronts because you’re winning.

Enterprise buyers signed OpenAI contracts when “safely” was still in the mission. They asked for safety representations. They got them. The mission change doesn’t void those contracts. It changes the context in which every future product decision will be made. A board that receives a direct share of profits, operating from a founding document that no longer names safety as the constraint, will make different calls than the board that signed those contracts. Maybe slightly different. Maybe significantly. The point is that the document that used to slow that down is gone.

The tell: Altman went loud and aggressive defending the water story. He said nothing about the mission statement publicly at all. When a company is that loud about one thing and completely silent about another, you already know which one matters more.

- Sam Altman defends AI resource usage: Water concerns ‘fake’ — CNBC

- Sam Altman gets defensive about AI’s electricity usage — Fortune

- OpenAI changed its mission statement 6 times in 9 years, removing ‘safely’ — Fortune

- OpenAI has deleted the word ‘safely’ from its mission — The Conversation

- Sam Altman on X: AI intern by September 2026 — X

- OpenAI’s Week of Damage Control — WebProNews

THE BOTTOM LINE

Karpathy told you he’s behind. Jensen will tell you tonight where the infrastructure bet actually stands. Altman told you where OpenAI is headed by what he chose not to say.

- Read the NVIDIA transcript tomorrow, not the headline. The number will be fine. The language around the next two quarters is what tells you whether the AI capex story holds or starts to bend.

- Find the person on your engineering team who owns agent mastery. Not the one who has the tool. The one who has put in the hours and knows what they don’t know yet. That person is a structural advantage right now. If you can’t name them, you have your answer.

- Pull your OpenAI contracts before the week is out. Find the safety and responsible development representations. Understand what’s still operative and what changed when the mission did.

The most honest person in AI this week was the one who admitted he’s still learning. Everyone else was defending something.

PROFILE

Boris Cherny

Boris Cherny was born in Odessa, Ukraine, emigrated to the U.S. in 1995, and graduated from UC San Diego. His grandfather was one of the first programmers in the USSR, working with punch cards. Cherny spent years at Meta as a Principal Software Engineer before joining Anthropic in September 2024. Six weeks after arriving, he shipped the first version of Claude Code: a command-line prototype he built for himself, not for the market. It was never supposed to be a product. Then other people started using it.

Track record: Claude Code hit $1 billion in annualized revenue in six months. It now accounts for more than half of all enterprise spending on Anthropic. Cherny personally ships 300 pull requests a month running five Claude agents in parallel, and writes 100% of his code through the tool he built. That’s not a marketing claim. It’s a workflow documented in enough detail that developers have built entire sites reverse-engineering it. What he got wrong early: the first versions built against Claude 3 didn’t work well enough to ship broadly. Cherny has been candid that the product only came together when the underlying models caught up to what he’d designed for. He built six months ahead of the model. He was right to.

Why we’re watching now: The gap Karpathy named in December, the new abstraction layer that even he hasn’t mastered, is exactly what Cherny built Claude Code to close. The person Cherny’s product is most directly helping is the category of developer who feels most behind. Enterprise teams that haven’t figured out agent orchestration yet are, functionally, the product’s entire addressable market right now. At $2.5 billion in run-rate revenue and growing, that market is enormous.

“I run 5 Claudes in parallel in my terminal. I number my tabs 1-5, and use system notifications to know when a Claude needs input.” — Boris Cherny, workflow post, January 2026

TRACKING — WHAT CEOS SHOULD BE WATCHING

- Anthropic has until Friday to hand over Claude or lose the Pentagon — Axios

- Google’s Gemini just took the “best reasoning model” crown from Claude — TechCrunch

- 78 AI bills in 27 states. Your vendors can’t answer the compliance question yet — Troutman Privacy

- OpenAI is now a for-profit company with profit-seeking investors on the board — Fortune

KEY PEOPLE & COMPANIES

| NAME | ROLE | COMPANY | LINK |
|---|---|---|---|
| Andrej Karpathy | Independent researcher | karpathy.ai | @karpathy |
| Jensen Huang | CEO | NVIDIA | @jensenhuang |
| Boris Cherny | Creator, Head of Claude Code | Anthropic | @bcherny |
| Sam Altman | CEO | OpenAI | @sama |
| Lisa Su | CEO | AMD | @LisaSu |
| Dario Amodei | CEO | Anthropic | @DarioAmodei |
| Pete Hegseth | Secretary of Defense | U.S. DoD | DoD |

SOURCES

- Andrej Karpathy: “I’ve never felt this much behind” — X

- Karpathy’s Thread Signals AI-Driven Development Breakpoint — Futurum

- Even AI Experts Feel Behind — Medium

- Nvidia earnings report collides with Wall Street skepticism — CNBC

- Feb. 25 Will Be a Huge Day for Nvidia — Motley Fool

- Prediction Markets Are 95% Sure Nvidia Will Beat Earnings — Motley Fool

- AMD and Meta Announce 6 Gigawatt GPU Partnership — AMD Official

- NVIDIA Q4 FY2026 earnings preview — S&P Global

- Nvidia Earnings, AI Spending Volatility, Huang — Israel Hayom

- Sam Altman defends AI resource usage: Water concerns ‘fake’ — CNBC

- Sam Altman gets defensive about AI’s electricity usage — Fortune

- OpenAI changed its mission statement 6 times, removed ‘safely’ — Fortune

- OpenAI has deleted the word ‘safely’ from its mission — The Conversation

- Sam Altman on X: AI intern by September 2026 — X

- OpenAI’s Week of Damage Control — WebProNews

- Hegseth gives Anthropic until Friday to back down — Axios

- Google’s Gemini 3.1 Pro has record benchmark scores — TechCrunch

- Proposed State AI Law Update: February 23, 2026 — Troutman Privacy

- Claude Code gives Anthropic its viral moment — Fortune

- Head of Claude Code: What happens after coding is solved — Lenny’s Newsletter

- The creator of Claude Code just revealed his workflow — VentureBeat

- Claude Code hits $1B as developers ditch ChatGPT — TechBuzz

Compiled from 22 sources across news sites, X threads, and company announcements. Cross-referenced with thematic analysis and edited by Anthony Batt, Harry DeMott and CO/AI’s team with 30+ years of executive technology leadership.