Signal/Noise: The Invisible War for Your Intent

2025-12-30

As AI’s generative capabilities become a commodity, the real battle shifts from creating content to capturing and owning the user’s context and intent. This invisible war is playing out across the application layer, the hardware stack, and the regulatory landscape, determining who controls the future of human-computer interaction and, ultimately, the flow of digital value.

The ‘Agentic Layer’ vs. The ‘Contextual OS’: Who Owns Your Digital Butler?

The past year has seen an explosion of AI agents (personal assistants, enterprise copilots, creative collaborators), all vying for pole position as your default digital interface. Google’s rumored ‘Project Chimera’ (an always-on, multimodal agent designed to anticipate needs across its ecosystem) and OpenAI’s aggressive push to embed ‘Agentic Assistants’ directly into major SaaS platforms like Salesforce and Adobe aren’t just product announcements; they are strategic land grabs for the ultimate lock-in mechanism: owning the user’s intent. Base models are rapidly approaching the commodity trap; the real differentiator isn’t what an AI can generate, but how deeply it understands and acts upon your unique context, preferences, and goals across every application.

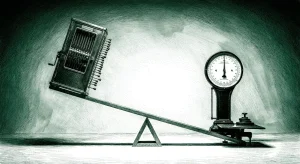

This isn’t about building a better chatbot; it’s about building the operating system for your digital life. Companies are pouring billions into collecting and processing ‘context data’—your calendar, emails, browsing history, even biometric cues—to feed these agents. The promise is hyper-personalization; the reality is a brutal fight for who controls the ‘master agent’ that orchestrates all others. The winner here gets to mediate every digital interaction, becoming the ultimate toll collector on the information superhighway. The losers will be relegated to providing commoditized capabilities that are merely integrated, rather than owning the user relationship. The focus isn’t on creating new value, but on capturing existing value by becoming the unavoidable intermediary. This is a platform play of the highest order, where the platform isn’t an app store, but a neural network that understands you better than you understand yourself.

Edge AI’s Double-Edged Sword: Decentralization Rhetoric Meets Concentrated Power

The narrative around ‘Edge AI’ has largely been one of decentralization, privacy, and bringing intelligence closer to the data source. Nvidia’s latest ‘inference-on-a-chip’ series, designed for everything from smart appliances to autonomous drones, and Apple’s rumored ‘Neural Engine Pro’ for on-device multimodal processing, are being lauded as steps towards a more distributed AI future. But beneath the surface, a different game is being played. While compute moves closer to the user, the control over that compute and the models running on it is consolidating.

This isn’t just about hardware sales; it’s about establishing new infrastructure chokepoints. Who provides the optimized compilers, the development frameworks, and the secure update mechanisms for these edge devices? The same giants who dominate cloud AI are now extending their tendrils to the edge, creating a new form of lock-in. Your smart home AI might run locally, but its core intelligence, updates, and interoperability protocols will likely be dictated by a handful of players. Furthermore, the massive investment required to design and manufacture these specialized chips means only a few companies can compete, creating an oligopoly at the hardware layer. The promise of ‘sovereign AI’ on national or even personal devices is a mirage if the underlying technology stack remains controlled by external powers. This is a classic infrastructure play, where the ‘picks and shovels’ are becoming highly specialized, expensive, and controlled, effectively creating new bottlenecks even as compute power seemingly proliferates.

The Regulatory Squeeze: Data Provenance and the AI Black Box

After years of vague pronouncements, regulators are finally getting serious about AI’s impact, and the latest directives from the EU’s AI Act (now in its implementation phase) and the US’s new ‘AI Data Integrity & Transparency Framework’ reveal a clear strategic shift. The focus is no longer just on ‘safe’ AI, but on transparent AI, particularly concerning data provenance and model explainability. Companies leveraging large language models for public-facing applications now face strict mandates to document training data sources, disclose synthetic content, and provide mechanisms for a ‘right to explanation’ regarding AI-driven decisions.

This isn’t just about compliance; it’s a fundamental re-evaluation of the economics of AI. The ‘move fast and break things’ approach to data scraping and model opacity is rapidly becoming untenable. The cost of compliance (auditing data pipelines, developing explainability tools, and managing ‘digital provenance’ for every piece of AI-generated content) will be immense. This regulatory pressure disproportionately impacts smaller players who lack the resources to meet these demands, inadvertently favoring large incumbents who can absorb these costs and integrate them into their ‘trust’ narratives. Furthermore, the push for data provenance creates a new competitive battleground: who has the cleanest, best-documented, and most ethically sourced datasets? This elevates the value of ‘gold-standard’ data and could lead to a ‘data cartel’ where access to high-quality, compliant data becomes a significant barrier to entry. The ‘black box’ era of AI is ending, but its replacement might not be a level playing field.

Questions

- If AI agents become the default interface, what happens to the concept of ‘app stores’ and the existing power dynamics of mobile ecosystems?

- As edge AI proliferates, will we see new forms of ‘AI-powered monopolies’ emerge, controlling not just software but the very hardware that runs our digital lives?

- With increasing regulatory pressure on data provenance, will the next AI gold rush be for ethically sourced, ‘clean’ datasets, and who will own them?