Signal/Noise

2025-01-02

The AI industry is entering its infrastructure maturity phase, where the real money shifts from building models to controlling access and distribution. While everyone watches the feature wars, the actual strategic battle is over who gets to sit between AI capabilities and end users, and OpenAI just made a bold play to occupy that position permanently as the platform layer.

The Platform Play Disguised as API Improvements

OpenAI’s latest API updates aren’t just technical improvements; they’re a calculated move to cement OpenAI as the middleware layer of AI. By offering structured outputs, function calling, and batch processing at scale, OpenAI is no longer competing with applications. It is making it easier for everyone else to build on top of its models while ensuring it remains the critical dependency. This is classic platform strategy: make yourself so useful that switching becomes prohibitively expensive, then slowly increase your take rate. The real genius isn’t in the features themselves but in how they create lock-in through developer convenience. Every startup that integrates these APIs deeply into its architecture becomes a long-term revenue stream that grows harder to replace over time. Meanwhile, competitors like Anthropic and Google are still playing the model-quality game, which matters but is ultimately commoditizable. OpenAI is building the toll road that everyone will have to use regardless of whose model is technically superior this quarter.

The Enterprise Context Capture War

Every major AI announcement now includes some variation of ‘enterprise-ready’ features, but what vendors are really fighting for is context lock-in. The company that becomes the repository for your organization’s institutional knowledge (your documents, processes, and decision patterns) doesn’t just have a product; it has your digital brain. This explains why Microsoft is pushing Copilot deeper into Office, why Google is integrating Gemini across Workspace, and why startups are racing to build vertical-specific solutions. The winner isn’t necessarily whoever has the smartest AI, but whoever accumulates the most irreplaceable context about how organizations actually work. Once your AI assistant knows your company’s jargon, your team’s preferences, and your industry’s unwritten rules, switching becomes an organizational trauma, not just a technical decision. That is why incumbents are pricing so aggressively: they’re not just competing for market share, they’re competing for permanent residency in corporate workflows.

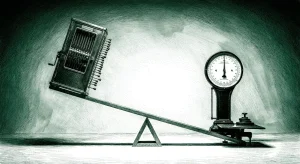

The Commoditization Cliff Everyone’s Ignoring

While AI companies race to differentiate through features, they’re accelerating toward their own commoditization. When every model can code, write, and reason at roughly human level, the sustainable advantage shifts from capabilities to distribution and switching costs. This explains the desperate scramble for vertical integration: everyone realizes that pure-play model companies face the same fate as commodity memory-chip makers in the 1990s, essential but low-margin suppliers to whoever controls the customer relationship. The smart money is already moving. Instead of funding the nth coding assistant or writing tool, investors are backing companies that use AI as one component of a broader value proposition. The future winners will solve complete business problems in which AI is a critical ingredient, rather than selling AI as the product itself. We’re about to watch a brutal consolidation in which only companies with genuine network effects, proprietary data, or irreplaceable customer relationships survive the commodity trap.

Questions

- If AI models become commodities, what prevents the entire industry from collapsing into a race to zero margins?

- Which companies are building genuine moats versus just riding the current hype cycle?

- How do we avoid a future where three companies control all access to artificial intelligence?