Signal/Noise

2025-11-06

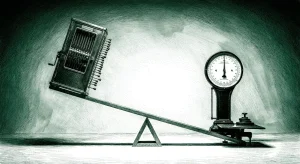

Today’s stories reveal AI’s inevitable collision with physical reality. Data centers are straining power grids and AI companies are burning through billions without profit, so the industry is desperately seeking new economic models, from space-based computing to direct government bailouts. Meanwhile, the actual deployment of AI reveals a messy truth about human-machine interaction that no amount of venture capital can optimize away.

The Physics Problem: When Silicon Dreams Hit Power Grid Reality

Google’s plan to put data centers in space isn’t visionary; it’s desperate. When a company known for moonshots starts seriously discussing orbital computing, you know terrestrial infrastructure has hit its limits. The math is brutal: the largest planned AI data centers now call for 5-10 GW of power (up from 5 MW a decade ago, a jump of 1,000x to 2,000x), while utilities scramble to add capacity and entire regions face the threat of rolling blackouts. The UK is building ‘AI factories of national importance’ next to landfills just to access renewable energy, while Chinese autonomous vehicle companies flee to Hong Kong exchanges as US regulators shut them out of the American market.

This isn’t a temporary scaling problem; it’s thermodynamics. By a widely cited estimate, every ChatGPT query burns roughly ten times the energy of a Google search, and that’s before we get to training runs that consume small cities’ worth of electricity. Duke Energy’s investment in satellite imagery for vegetation management tells the real story: AI isn’t just consuming power, it’s exposing how fragile the grid itself already is. When your business model requires rewiring civilization’s energy infrastructure, maybe reconsider the business model.

The space gambit reveals Silicon Valley’s fundamental misunderstanding of physics. Yes, solar panels generate more power in orbit, but launching rockets to avoid terrestrial resource constraints is like solving traffic by building roads in the sky. The embodied energy of getting that compute to orbit, at hundreds of tons of CO2 per launch, makes the solution worse than the problem. Google’s 2027 timeline for prototype satellites coincides neatly with the point at which terrestrial data center constraints will bite hardest. This isn’t innovation; it’s expensive procrastination.

The Bailout Signal: When ‘Disruption’ Meets Balance Sheets

OpenAI CFO Sarah Friar accidentally said the quiet part out loud: the AI industry needs government guarantees to survive. Her quick walk-back after public backlash doesn’t change the underlying math. $1.4 trillion in infrastructure commitments against $20 billion in revenue isn’t scaling; it’s gambling with other people’s money. When Sam Altman rushes to Twitter to deny wanting bailouts, he’s protesting too much. Of course they want bailouts. They just can’t say it.
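For readers who want to check that math, here is a rough back-of-envelope sketch in Python. It uses only the two figures quoted above; the function name `coverage_ratio` and the flat-revenue assumption are ours, purely for illustration, and are not drawn from any company filing.

```python
def coverage_ratio(commitments_usd: float, revenue_usd: float) -> float:
    """Return how many multiples of annual revenue the commitments represent."""
    return commitments_usd / revenue_usd


# Figures as quoted in the paragraph above.
commitments = 1.4e12  # $1.4 trillion in infrastructure commitments
revenue = 20e9        # $20 billion in revenue

ratio = coverage_ratio(commitments, revenue)

# Illustrative assumption: revenue stays flat and every dollar of it goes
# toward the commitments. Under that assumption the ratio is also the number
# of years needed to cover them.
print(f"Commitments are {ratio:.0f}x annual revenue")
```

Revenue is presumably expected to grow, so the flat-revenue reading is a worst case, but it shows the scale of the gap the guarantees would have to bridge.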

The parallels to the 2008 banking crisis are eerie. Financial institutions made massively leveraged bets on assets they didn’t understand, created systemic risk through interconnected dependencies, then claimed they were too important to fail. Replace ‘mortgage-backed securities’ with ‘AI training runs’ and ‘credit default swaps’ with ‘compute contracts’ and you have the same story. The difference is that banks at least generated cash flow before imploding.

Geoffrey Hinton, the godfather of AI, delivered the industry’s death sentence: ‘To make money you’re going to have to replace human labor.’ Not augment, not assist—replace. But here’s the catch: if AI eliminates the jobs that generate the consumer spending that funds the economy that buys AI services, who exactly is the customer? The industry is building toward a world where their solution eliminates their market. That’s not a business model; it’s a Ponzi scheme with better marketing.

Meanwhile, News Corp’s ‘woo and sue’ strategy—licensing content to AI companies while simultaneously suing others—reveals the ecosystem’s extraction dynamics. Publishers need AI money to survive, but AI needs publisher content to function. It’s a hostage situation disguised as partnership.

Human Friction: The Messy Reality of AI Deployment

While VCs obsess over AGI timelines, actual humans using AI reveal a different story. Students are developing anxiety disorders over false positive AI detection tools, workers earning 40% more with AI still need nine specific prompts to make it useful, and Chinese autonomous vehicles are crashing on stock exchanges before they crash on roads. The gap between AI marketing and AI reality is widening, not closing.

The most telling story isn’t in Silicon Valley—it’s in universities where students face academic integrity tribunals for work flagged as ‘AI-generated’ despite being written before ChatGPT existed. Turnitin’s detection tools have false positive rates that would be criminal in any other domain, yet 16,000 institutions use them. This isn’t careful deployment; it’s panic masquerading as policy. When your detection system flags human creativity as machine output, you’ve revealed more about your assumptions than your accuracy.

Snap’s $400 million deal with Perplexity shows how platform integration really works: not seamless AI assistance, but expensive middleware that makes simple search queries feel futuristic. Users don’t want AI; they want their problems solved faster. If that requires AI, fine. If it doesn’t, they’ll use whatever works. The industry keeps confusing technical capability with user demand.

Palantir’s 137x revenue multiple reflects this disconnect perfectly. Investors are pricing in a future where data analytics becomes magical, not the reality where data analytics becomes commoditized. The company’s success isn’t proof AI works—it’s proof government contracts work. There’s a difference.

Questions

- If AI companies need to eliminate human jobs to become profitable, but human jobs fund the economy that buys AI services, how exactly does this end?

- When ‘AI-first’ companies start seriously planning orbital data centers to escape terrestrial resource constraints, what does that tell us about the sustainability of their business models?

- If the gap between AI hype and AI utility is widening rather than closing, why do valuations keep climbing—and who will be left holding the bag when the music stops?